Banks and insurers have spent years deploying AI across their customer experience operations. Chatbots, voice automation, agent-assist tools, workflow triggers — most large enterprises have at least one of these running at scale.

The investment has been real. The results, less so.

Some interactions are faster. A few processes run more smoothly. But the fundamental experience, the one customers actually feel, hasn't changed the way anyone expected it to.

Here's the uncomfortable truth: technology isn't the problem. The way organizations are deploying it is.

Where Things Stand Today

The technical case for AI in customer experience is stronger than ever. Today's systems understand what customers are asking, handle back-and-forth conversations, and connect with enterprise systems far more reliably than they did even two years ago. Speed is no longer an issue. Neither is language understanding.

In short, AI is ready. The question is whether the organizations using it are.

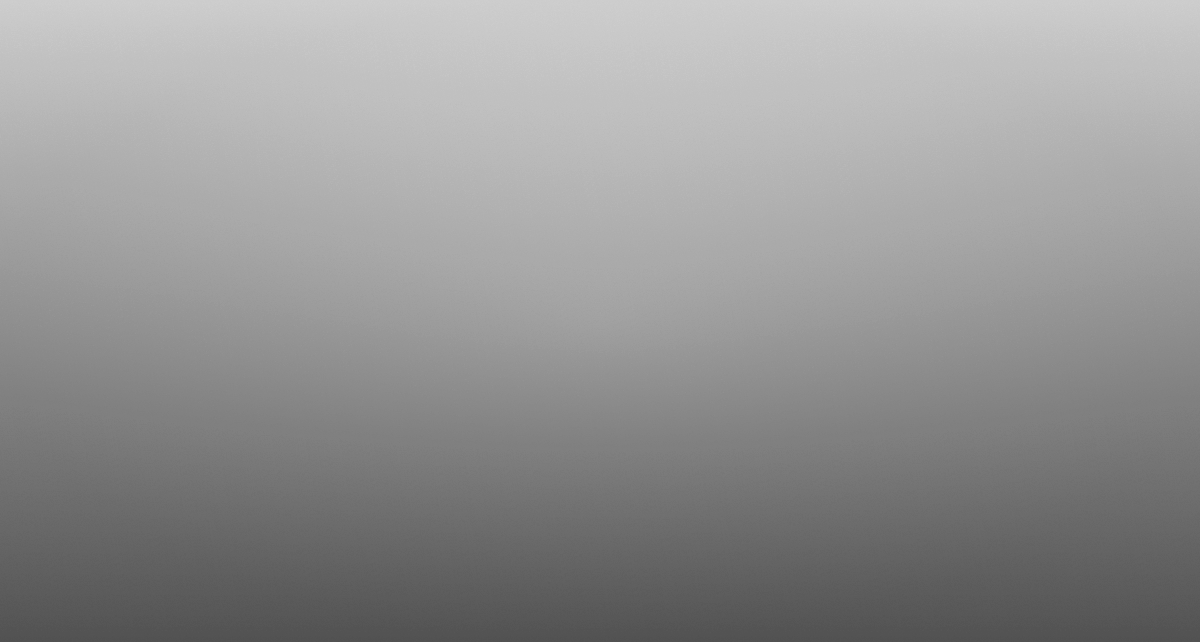

Yet despite the maturity of the technology, a large number of deployments still underperform. A chatbot handles simple questions but breaks down the moment something slightly unusual comes up. A voice system can take the volume but loses the thread mid-conversation. These aren't random failures; they're signs of a deeper problem in how the deployment was designed.

Why Most AI Deployments Don't Deliver

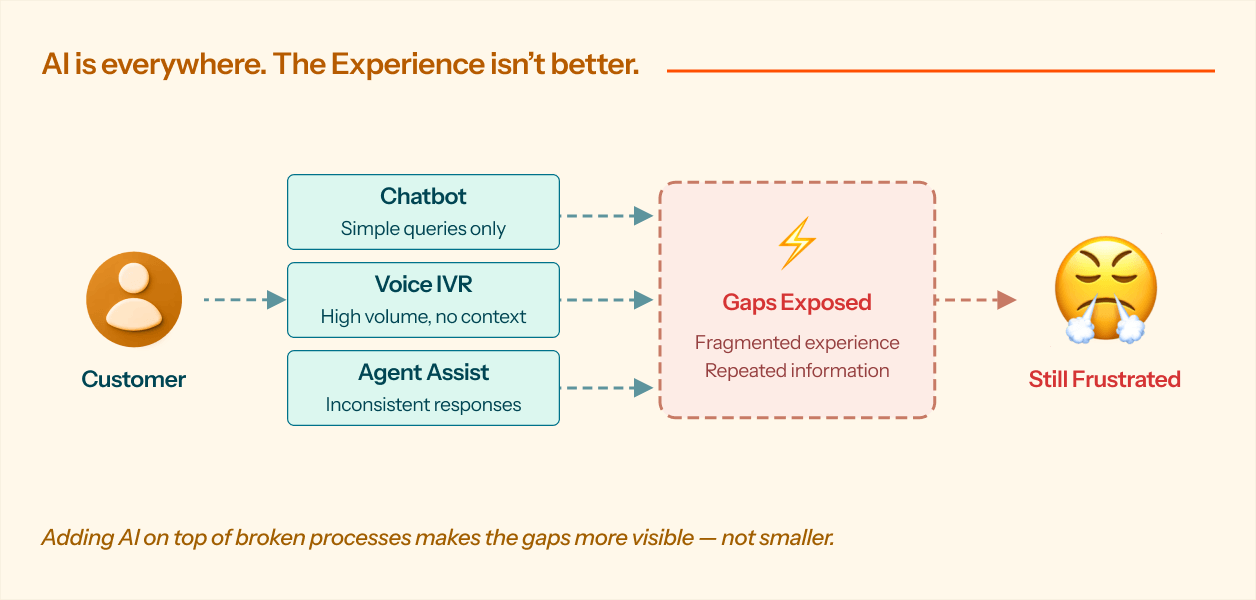

The issue isn't which AI tool an organization picks. It's the order in which decisions get made.

In most cases, the conversation starts with "we need a chatbot" or "let's automate inbound calls" before anyone has clearly defined what the actual problem is. The tool gets chosen. Then it gets built on top of whatever processes already exist. And those processes, more often than not, are already broken.

AI doesn't fix a broken process. It makes the cracks more visible.

Three failure patterns show up again and again:

What the Better Approach Looks Like

Organizations that are genuinely moving the needle on customer experience aren't using different technology. They're using a different starting point.

Instead of beginning with tools, they begin with the customer journey.

They map out specific moments (onboarding, renewals, claims, service escalations) and ask hard questions. Where do customers get stuck? Where are agents spending time on work that doesn't require human judgment? Where does information break down between teams? Only then does AI enter the picture, built into a redesigned process rather than bolted onto an existing one.

Three things consistently separate these deployments from the rest:

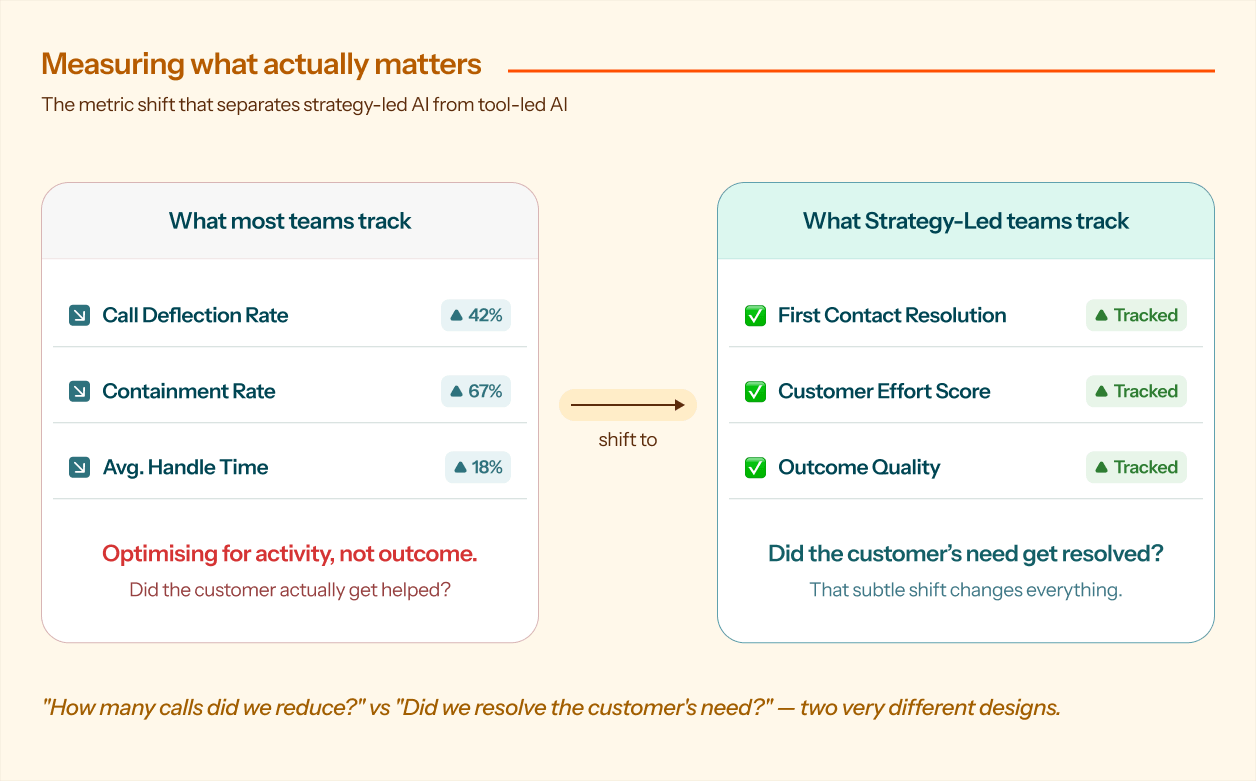

The measure of success isn't whether the AI replied; it's whether the customer's problem was actually solved. That shift changes how every part of the system gets built.

When AI has access to CRM data, policy platforms, and customer history, it can have a real conversation. It knows who the customer is, where they are in their journey, and what they likely need next. That's the difference between an AI that answers questions and one that actually helps.

Resolution rates, customer effort scores, and downstream behavior tell a more honest story than call deflection metrics alone. If the goal is better outcomes, the measurement has to reflect that.
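To make the gap between deflection and resolution concrete, here is a minimal sketch in Python. The `Interaction` record and its field names are hypothetical, chosen only for illustration, and the definitions are one reasonable reading of these metrics rather than an industry standard.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    # All fields are illustrative assumptions, not a real schema.
    handled_by_ai: bool   # AI closed the contact without a human transfer
    resolved: bool        # the customer's problem was actually solved
    repeat_contact: bool  # the customer came back about the same issue


def deflection_rate(interactions):
    """Share of contacts the AI closed without involving a human."""
    if not interactions:
        return 0.0
    return sum(i.handled_by_ai for i in interactions) / len(interactions)


def resolution_rate(interactions):
    """Share of contacts resolved with no repeat contact afterwards."""
    if not interactions:
        return 0.0
    solved = sum(i.resolved and not i.repeat_contact for i in interactions)
    return solved / len(interactions)


sample = [
    Interaction(handled_by_ai=True, resolved=True, repeat_contact=False),
    Interaction(handled_by_ai=True, resolved=False, repeat_contact=True),
    Interaction(handled_by_ai=True, resolved=False, repeat_contact=True),
    Interaction(handled_by_ai=False, resolved=True, repeat_contact=False),
]

print(deflection_rate(sample))  # 0.75
print(resolution_rate(sample))  # 0.5
```

In this made-up sample the AI deflects three of four contacts, yet only half of all contacts end with the problem solved. A dashboard that reports deflection alone would hide that difference.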

Why This Matters More in Banking and Insurance

The stakes are higher in BFSI (banking, financial services, and insurance) than in most industries.

Customers in banking and insurance aren't usually calling about something trivial. They're applying for a loan. Filing a claim after something went wrong. Flagging a security concern on their account. These are high-stress, high-stakes moments, and how an organization shows up in those moments directly shapes whether customers trust it.

When AI is deployed without a strategy, the damage is real. Customers get partial answers, repeat themselves across multiple touchpoints, and get transferred before anyone actually resolves their issue. Behind the scenes, operations teams are still carrying the same load, and now they're also handling AI-generated exceptions.

When the deployment is strategy-led, the difference is clear. Interactions become more consistent. Problems get resolved in fewer steps. Frontline teams spend their time on work that actually needs a human. And compliance and audit trails hold up, because the system was designed with structured decision flows from the start.

The experience gets better not because more is automated, but because more is aligned.

The Bottom Line

The technology is there. AI systems today are capable, reliable, and ready to integrate with enterprise infrastructure.

What's still missing in most organizations is a clear strategy for what they're actually trying to achieve.

The institutions that will pull ahead aren't the ones with the most sophisticated AI. They're the ones that took the time to define the problem first, redesign the process, and then build AI into it, not the other way around.

For BFSI leadership, the question is no longer whether to use AI in customer experience.

It's whether you've done the strategic work to make it worth it.